Research essays and executable reference implementations for applied AI, systems architecture, and production data platforms.

Essays → harveygill.substack.com

Code → here.

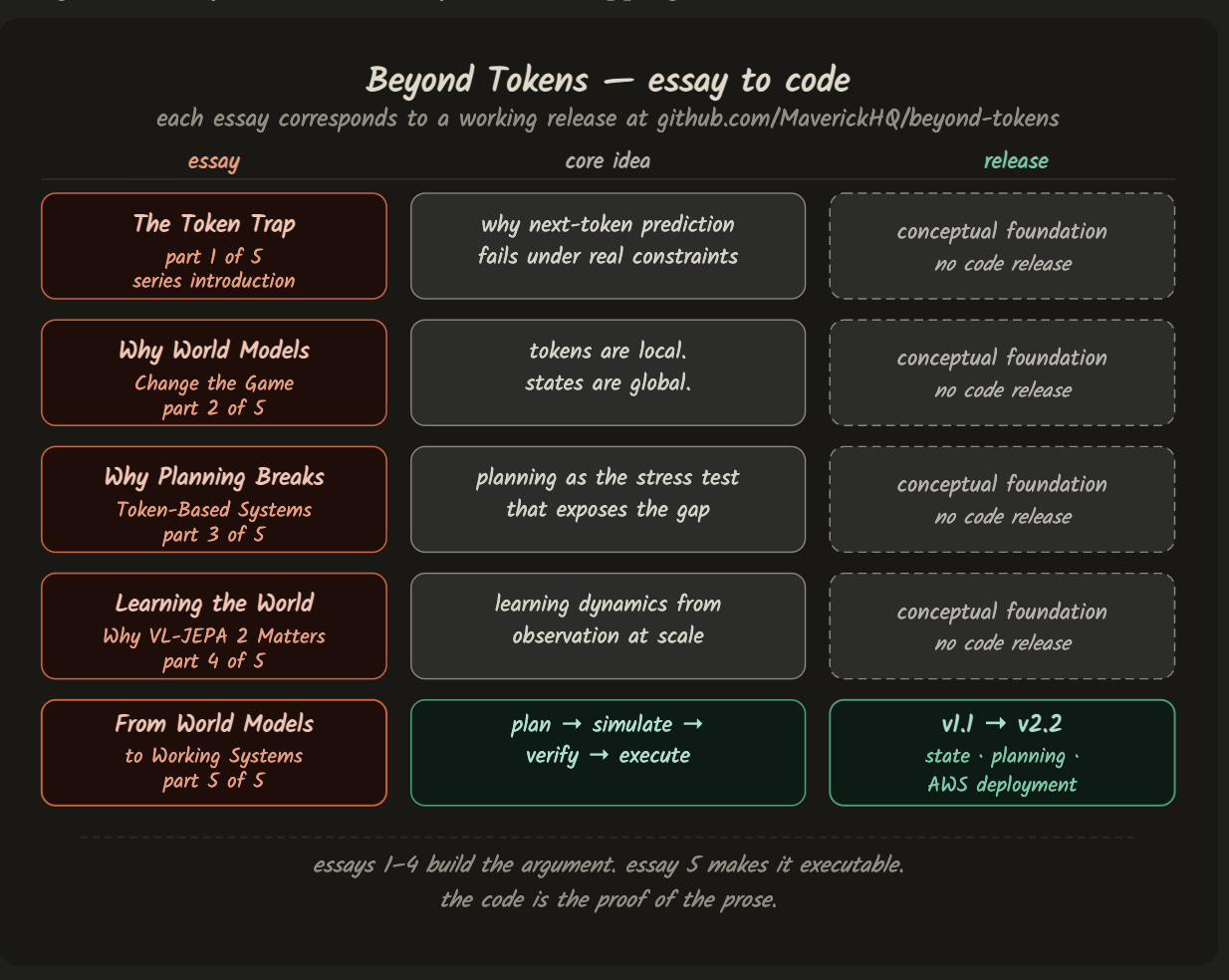

Why world models matter — and why token-based AI fails when decisions must persist over time.

The core argument: modern AI failures are almost never the result of bad models. They are almost always architectural mismatches, where a token-based system is asked to make decisions that must persist over time. The alternative: systems built around explicit world models with persistent state, defined dynamics, and verifiable transitions.
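As a rough illustration of those three properties, here is a minimal Python sketch. It is not taken from the beyond-tokens codebase: `WorldState`, `apply_action`, `verify_transition`, `step`, and the inventory domain are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class WorldState:
    """Persistent state: an explicit, immutable record (hypothetical fields)."""
    step: int
    inventory: int


class InvalidTransition(Exception):
    """Raised when an action or its resulting state breaks the rules."""


def apply_action(state: WorldState, action: str, amount: int) -> WorldState:
    """Defined dynamics: the only way the state is allowed to change."""
    if amount < 0:
        raise InvalidTransition("amount must be non-negative")
    if action == "add":
        delta = amount
    elif action == "remove":
        delta = -amount
    else:
        raise InvalidTransition(f"unknown action: {action!r}")
    return replace(state, step=state.step + 1, inventory=state.inventory + delta)


def verify_transition(before: WorldState, after: WorldState) -> bool:
    """Verifiable transition: invariants checked on every proposed step."""
    return after.step == before.step + 1 and after.inventory >= 0


def step(state: WorldState, action: str, amount: int) -> WorldState:
    """Propose a new state and commit it only if verification passes."""
    proposed = apply_action(state, action, amount)
    if not verify_transition(state, proposed):
        raise InvalidTransition(f"rejected: {state} -> {proposed}")
    return proposed


if __name__ == "__main__":
    s0 = WorldState(step=0, inventory=5)
    s1 = step(s0, "remove", 3)
    print(s1)
    try:
        step(s1, "remove", 10)  # would drive inventory below zero
    except InvalidTransition as err:
        print("blocked:", err)
```

In this sketch a model may propose any action it likes, but the state only changes through `step`, so an invalid proposal is rejected rather than silently absorbed into later decisions.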

Essays: harveygill.substack.com/p/beyond-tokens

Code: MaverickHQ/beyond-tokens

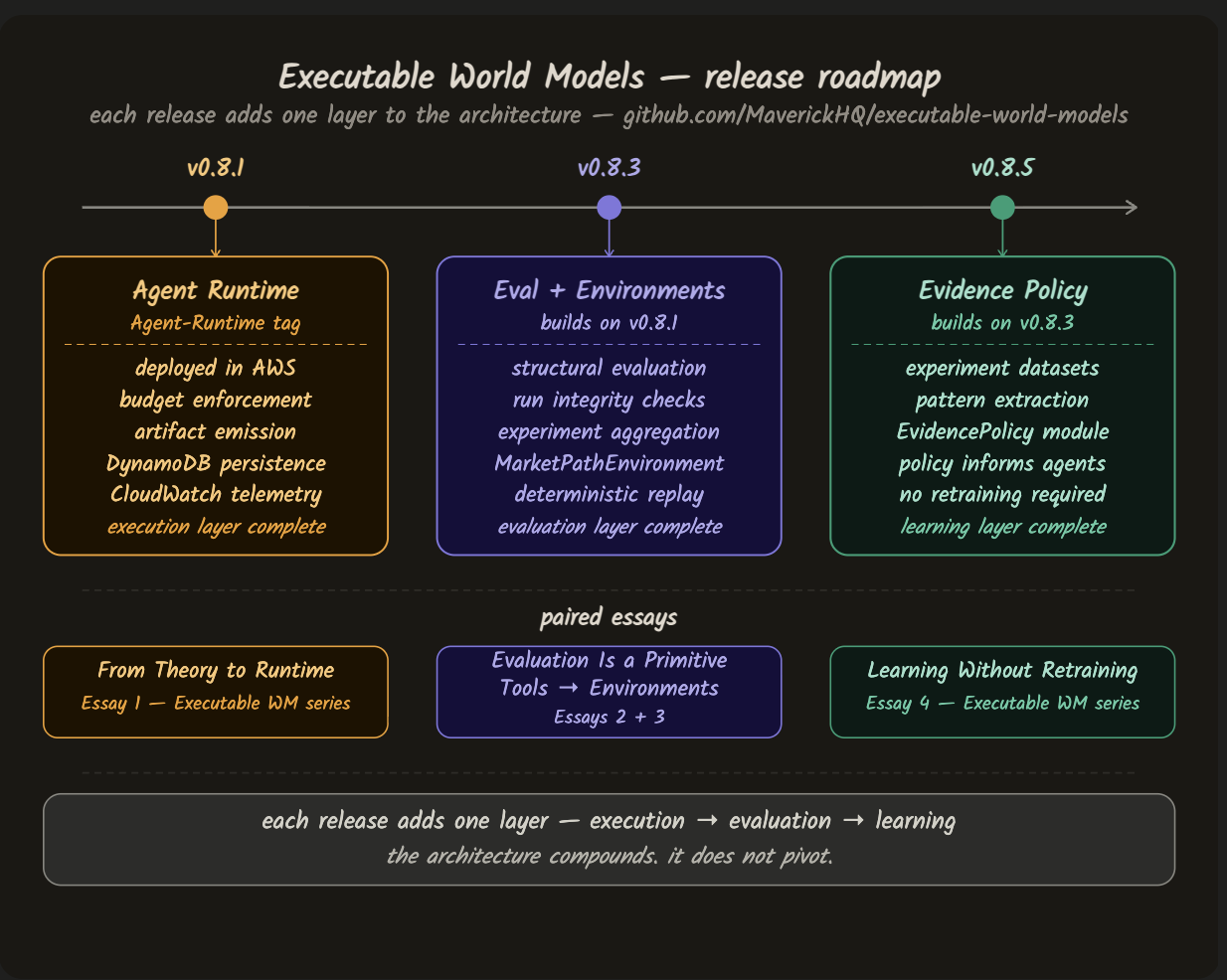

How to build AI systems that can act reliably — deployed, evaluated, and capable of improving from their own experiments.

Built layer by layer: runtime → evaluation → environments → architecture → learning. Each essay mirrors a versioned codebase milestone.

Essays: harveygill.substack.com/p/executable-world-models

Code: MaverickHQ/executable-world-models

The architecture assembled across the series: tokens → models → agents → constraints → artifacts → evaluation → experiments → environments → evidence → policy

Harvey Gill — architect, developer, applied AI practitioner, technical writer.

Large-scale systems design, production data platforms, and applied AI in operational environments. The work here is pragmatic: how systems are actually built and operated, where theory breaks down under real constraints, and what it takes to make AI trustworthy enough to act.

Substack: harveygill.substack.com

LinkedIn: linkedin.com/in/gillharvey

Ideas first. Executable artefacts that make them concrete.